Univariate/Descriptive Statistics & Normality

What are Univariate/Descriptive Statistics?

There are various ways to call univariate statistics in R. As social scientists, the main univariate statistics we are concerned with are the mean, median, standard deviation, minimum, maximum, and range. R comes with a stock function, which, unfortunately, does not provide some of these measures. Therefore, we use the describe function from the psych package (or the univ.desc function from the vannstats package). We can call univariate statistics for both the full data set and a specific variable.

First, let’s load the packages as libraries, and create the data1 object out of the Defendants2025 data.

Descriptive Statistics

For the full data set, we can call univariate statistics as such…

## Description of data1##

## Numeric

## mean median var sd valid.n

## id 869.50 869.50 251865.17 501.86 1738

## age 35.39 34.00 129.01 11.36 1738

## priors 1.63 1.00 1.74 1.32 1738

## risk_score 4.51 4.35 7.75 2.78 1738

## bail 22572.21 20000.00 262445703.92 16200.18 1738

##

## Factor

##

## race latine black white asian other

## Count 817.00 435.00 382.00 70.00 34.00

## Percent 47.01 25.03 21.98 4.03 1.96

## Mode latine

##

## race_binary non-white white

## Count 1356.00 382.00

## Percent 78.02 21.98

## Mode non-white

##

## charge Property Crime/Theft Assault/Battery Domestic Violence/Child Abuse Drug-Related

## Count 661.00 342.00 239.00 219.0

## Percent 38.03 19.68 13.75 12.6

##

## charge Weapon Possession Vehicle-Related

## Count 206.00 71.00

## Percent 11.85 4.09

## Mode Property Crime/Theft

##

## gang no yes

## Count 1434.00 304.00

## Percent 82.51 17.49

## Mode no

##

## gun no yes

## Count 1015.0 723.0

## Percent 58.4 41.6

## Mode no

##

## perkins no yes

## Count 1177.00 561.00

## Percent 67.72 32.28

## Mode no

Whereas, for a specific variable, we can call univariate statistics as such…

## Description of structure(list(x = c(2.98, 6.68, 4.6, 1.32, 0.44, ...)), row.names = c(NA, -1738L), class = "data.frame")

## Numeric

## mean median var sd valid.n

## x 4.51 4.35 7.75 2.78 1738

You could also call the same information using the univ.desc function within the vannstats package, as:

## n mean sd variance median min max skewness kurtosis se

## 1 1738 4.506024 2.784066 7.751021 4.35 0.01 10 0.2015323 -1.076598 0.06678125

In addition, we can call univariate statistics for a variable broken out by groups/categories of another variable. Note that this is the first step towards bivariate statistics (looking at the relationship between two variables). We do this by using the describeBy function, where we list the main variable first and the grouping/category variable second, as such…

##

## Descriptive statistics by group

## group: asian

## vars n mean sd median trimmed mad min max range skew kurtosis se

## X1 1 70 3.14 2.54 2.36 2.77 1.44 0.04 9.22 9.18 1.22 0.3 0.3

## -------------------------------------------------------------------------------

## group: black

## vars n mean sd median trimmed mad min max range skew kurtosis se

## X1 1 435 4.82 2.82 4.74 4.8 3.53 0.02 10 9.98 0.03 -1.22 0.14

## -------------------------------------------------------------------------------

## group: latine

## vars n mean sd median trimmed mad min max range skew kurtosis se

## X1 1 817 4.82 2.81 4.96 4.8 3.31 0.01 10 9.99 0.01 -1.11 0.1

## -------------------------------------------------------------------------------

## group: other

## vars n mean sd median trimmed mad min max range skew kurtosis se

## X1 1 34 3.69 2.64 3.44 3.45 2.71 0.01 9.98 9.97 0.68 -0.22 0.45

## -------------------------------------------------------------------------------

## group: white

## vars n mean sd median trimmed mad min max range skew kurtosis se

## X1 1 382 3.8 2.53 3.42 3.58 2.7 0.03 9.98 9.95 0.61 -0.36 0.13

Similarly, you can use the univ.desc function to call the same information (as describeBy) by adding your grouping variable as an additional item, as:

## race n mean sd variance median min max skewness kurtosis se

## 1 asian 70 3.139286 2.542763 6.465644 2.365 0.04 9.22 1.221610349 0.3017411 0.30391833

## 2 black 435 4.821770 2.818715 7.945151 4.740 0.02 10.00 0.034226079 -1.2242178 0.13514702

## 3 latine 817 4.820012 2.809088 7.890977 4.960 0.01 10.00 0.009597444 -1.1060331 0.09827756

## 4 other 34 3.691765 2.639655 6.967779 3.440 0.01 9.98 0.679724152 -0.2222203 0.45269710

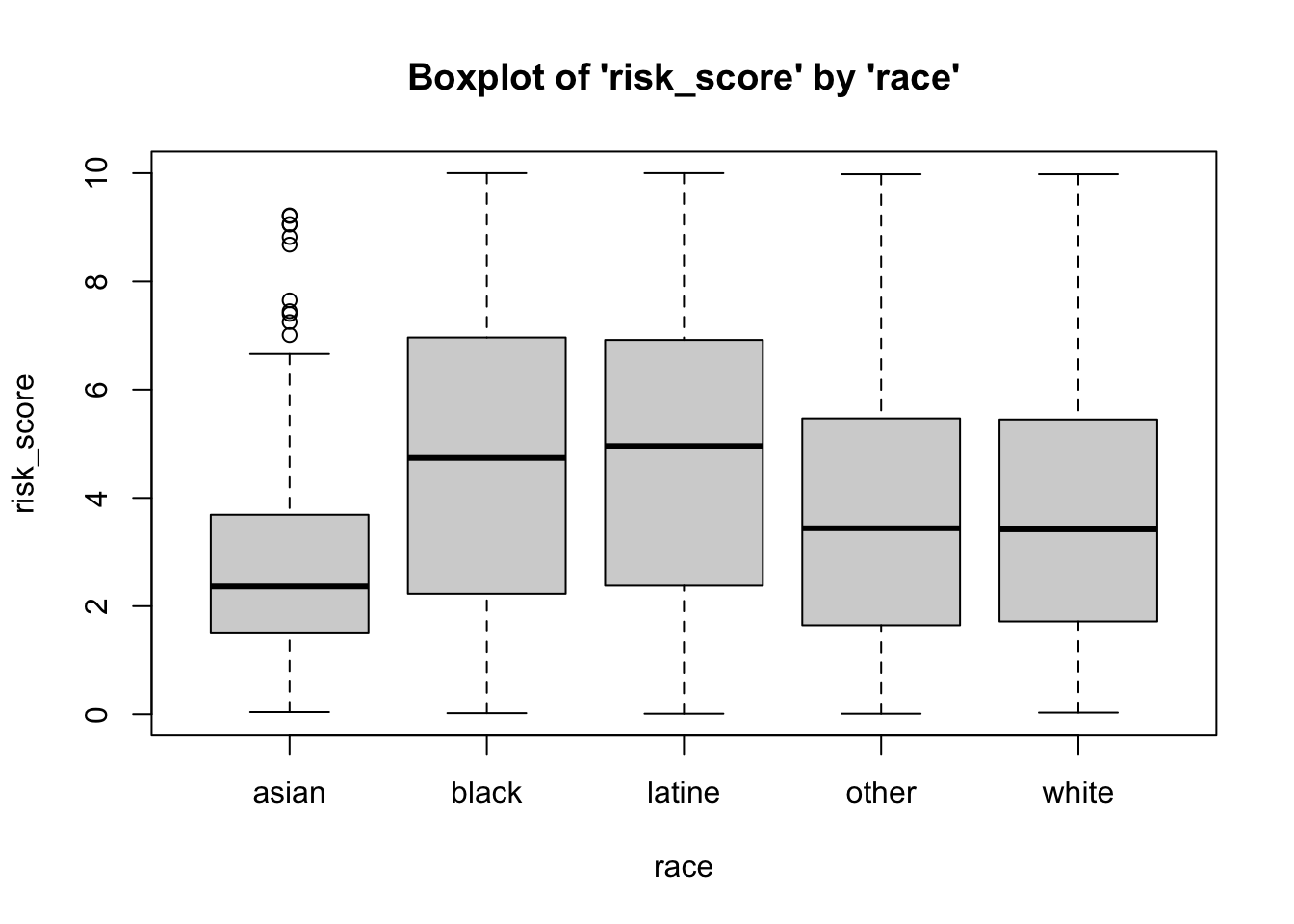

## 5 white 382 3.797853 2.526206 6.381717 3.420 0.03 9.98 0.607126209 -0.3629455 0.12925194Above, we can see that the mean risk score differs by the race of the defendant (e.g. black and latine individuals have, on average, higher risk scores).
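For readers who want to see what sits behind a grouped summary like the one above, here is a minimal base R sketch. It assumes a small made-up data frame (called toy here, not the Defendants2025 data), so its numbers will not match the output shown in this section, and it is not the internal code of describeBy or univ.desc.

```r
# Toy stand-in for data1 (made-up values, NOT the Defendants2025 data)
toy <- data.frame(
  risk_score = c(2.1, 3.4, 5.0, 6.2, 4.8, 7.5, 1.9, 3.3),
  race       = c("asian", "asian", "black", "black",
                 "latine", "latine", "white", "white")
)

# tapply() splits risk_score by race and applies a function to each group
group_n     <- tapply(toy$risk_score, toy$race, length)
group_means <- tapply(toy$risk_score, toy$race, mean)
group_sds   <- tapply(toy$risk_score, toy$race, sd)

# Collect the pieces into one table, one row per category of race
summary_by_race <- data.frame(
  race = names(group_means),
  n    = as.vector(group_n),
  mean = as.vector(group_means),
  sd   = as.vector(group_sds)
)
print(summary_by_race)
```

Comparing the mean column across rows is the same reading we did above: it shows whether the typical score differs from one group to the next.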

Skewness and Kurtosis

Skewness is the measure of how close or far a distribution is from symmetry (the normal curve). While it summarizes clustering of scores along the X-axis, with regard to the position of the mode, median, and mean, skewness is also concerned with length/width of the tails of the distribution, relative to one another.

Skewness ranges from \(-\infty\) to \(\infty\). The sign indicates the type of skew, with \(-\) indicating negative skewness, \(+\) indicating positive skewness, and 0 indicating no skew (i.e., symmetry, as in the normal curve). The cutoffs for skewness, based on the absolute value of the skewness statistic \(x\), are as follows:

High: \(|x| \geq 1\)

Moderate: \(.5 \leq |x| \lt 1\)

Low: \(0 \leq |x| \lt .5\)

Kurtosis is sometimes referred to as a measure of the peakedness of the distribution, and of how different the distribution is from mesokurtic (i.e., middle kurtosis, as in the normal curve). Statisticians have argued that kurtosis is, more appropriately, a measure of the height/thickness of the tails of the distribution.

Statisticians have developed a kurtosis measure that represents excess kurtosis beyond the normal curve (although typical kurtosis ranges from 1 to \(+ \infty\)). This excess kurtosis measure ranges from \(-2\) to \(+ \infty\). Using this metric, negative values represent platykurtic distributions and positive values indicate leptokurtic distributions. Distributions close to a kurtosis value of 0 are considered mesokurtic. We use cutoffs to indicate types of kurtosis, as follows…

Platykurtic: \(-2 \leq x \lt 0\); or \(x \lt 0\)

Mesokurtic: \(x \approx 0\)

Leptokurtic: \(+ \infty \geq x \gt 0\); or \(x \gt 0\)
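To make the cutoffs above concrete, here is a minimal base R sketch that computes simple moment-based skewness and excess kurtosis and then labels the skewness using the cutoffs from this section. The helper names (skewness_simple, excess_kurtosis_simple, classify_skew) are hypothetical and written for illustration only. Packaged functions may apply small-sample adjustments, so their values can differ slightly from these.

```r
# Moment-based skewness: third central moment over (variance)^(3/2)
skewness_simple <- function(x) {
  x <- x[!is.na(x)]
  d <- x - mean(x)
  mean(d^3) / mean(d^2)^1.5
}

# Excess kurtosis: fourth central moment over variance^2, minus 3
# (subtracting 3 puts the normal curve at 0)
excess_kurtosis_simple <- function(x) {
  x <- x[!is.na(x)]
  d <- x - mean(x)
  mean(d^4) / mean(d^2)^2 - 3
}

# Label skewness using the cutoffs given in the text
classify_skew <- function(s) {
  a <- abs(s)
  if (a >= 1) "high" else if (a >= 0.5) "moderate" else "low"
}

scores <- c(1, 2, 2, 3, 3, 3, 4, 4, 5, 12)  # made-up, right-skewed values
s <- skewness_simple(scores)
k <- excess_kurtosis_simple(scores)
cat("skewness:", round(s, 2), "(", classify_skew(s), ")\n")
cat("excess kurtosis:", round(k, 2), "\n")

# The grouped output earlier reported skew of 1.22 (asian) and 0.03 (black)
classify_skew(1.22)  # "high"
classify_skew(0.03)  # "low"
```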

Calling Specific Univariate Statistics

Beyond using the describe function, you can call single univariate statistics. Here, we’ll ask for specific univariate statistics, one at a time, for the risk_score variable. Below, we’ve added the option na.rm=T (alternatively, na.rm=TRUE), meaning that if observations are missing (NA) for the variable we’re working with, we still want R to calculate the statistic for the non-missing cases by removing the missing cases.
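As a minimal sketch of the kind of calls this paragraph describes, the base R functions below are applied to a small made-up vector with one missing value (not the risk_score variable), so the results will not match the output shown for the Defendants2025 data.

```r
scores <- c(2.5, 4.0, NA, 6.5, 3.0)  # made-up values with one missing case

# Without na.rm, a single NA makes the result NA
mean_with_na <- mean(scores)

# With na.rm = TRUE, the NA is dropped before calculating
mean_x   <- mean(scores, na.rm = TRUE)
median_x <- median(scores, na.rm = TRUE)
sd_x     <- sd(scores, na.rm = TRUE)
min_x    <- min(scores, na.rm = TRUE)
max_x    <- max(scores, na.rm = TRUE)
range_x  <- range(scores, na.rm = TRUE)  # returns c(minimum, maximum)
spread_x <- diff(range_x)                # maximum minus minimum

print(c(mean = mean_x, median = median_x, sd = sd_x,
        min = min_x, max = max_x, range = spread_x))
```

Note that range() returns the minimum and maximum as a pair. To get the range as a single number, take the difference between them.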

## [1] 4.506024

## [1] 4.35

## [1] 2.784066

## [1] 0.01

## [1] 10

## [1] 0.01 10.00

## [1] 9.99

Standardized Scores (Z-Scores) and Interval Estimates around Means (CI)

Z-Score

Recall that Z-scores are standardized scores – how close or far an observation’s score is from the mean, in standard deviation units. These are relative scores because the standard deviation (as well as the mean) incorporates information from all other observations.

The calculation for Z-scores is:

\(Z = \frac{(X - \mu)}{\sigma}\)

However, the above calculation relies on the population parameters \(\mu\) (the population mean) and \(\sigma\) (the population standard deviation), which we often do not know. Instead, the calculation for each observation’s Z-score uses sample statistics:

\(Z_{i} = \frac{(X_{i} - \bar{X})}{SD}\)

where…

- \(X_{i}\) is the raw score for a given observation

- \(\bar{X}\) is the mean for all observations

- \(SD\) is the standard deviation for all observations

For example, if we wanted to calculate a Z-score for an individual who received a risk score of 5, relative to all other defendants in the Defendants2025 data set, we would use the formula:

\(Z_{Individual} = \frac{(5 - \bar{X}_{risk\_score})}{SD_{risk\_score}}\)

Luckily, I’ve created a Z-score calculation function, z.calc(), for calculating a z-score for a value, given the mean and standard deviation for a variable within a data frame (or a list of values). It takes:

- the data frame (or a values list)

- the variable name for the variable of interest

- the raw score

as such…

## Raw Score Mean Z Score

## 5.0000000 4.5060242 0.1774297

This indicates that the risk score for the individual is .177 standard deviation units above the mean.
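The same arithmetic can be checked by hand. The sketch below plugs the mean (4.5060242) and standard deviation (2.784066) reported for risk_score into the Z-score formula. The helper z_from_summary is a hypothetical name used for illustration, not the z.calc() function itself.

```r
# Z = (raw score - mean) / standard deviation
z_from_summary <- function(raw, mean_x, sd_x) {
  (raw - mean_x) / sd_x
}

# Mean and SD for risk_score, as reported earlier in this section
z <- z_from_summary(raw = 5, mean_x = 4.5060242, sd_x = 2.784066)
round(z, 4)  # 0.1774

# The same formula applied to every value of a made-up vector at once
toy_scores <- c(2, 4, 6, 8)
toy_z <- (toy_scores - mean(toy_scores)) / sd(toy_scores)
print(toy_z)
```

A positive Z-score means the raw score sits above the mean, and a negative one means it sits below.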

Confidence Intervals

Recall that a confidence interval is an interval estimate around the mean: a range of values, built from the mean and a Z-score, that is expected to capture the population mean at a chosen level of confidence.

The calculation for confidence intervals is:

\(CI = \mu \pm Z {\sigma}\)

…or, more appropriately, the calculation for a given confidence interval, based on a given confidence level (CL) is:

\(CI_{CL} = \mu \pm Z_{CL}{\sigma}\)

where…

- \(CL\) represents the Confidence Level you’re interested in using (e.g. 95%, 99%, 99.9%, etc.)

- \(Z_{CL}\) represents the Z-score associated with that Confidence Level you’re using (e.g. 95%, 99%, 99.9%, etc.)

For example, for the 99.9% CI, we would have an associated Z-score (\(Z_{CL}\)) of \(Z_{99.9} = 3.29\), such that, the CI calculation would be:

\(CI_{99.9} = \mu \pm 3.29{\sigma}\)

However, the above formula relies on \(\sigma\), which is an unknown population parameter: the standard deviation of a variable in the population. Our best guess of that population parameter is the standard deviation statistic from our sample, or \(SD\), but our sample standard deviation is based on fewer cases than the standard deviation from the population. As such, we need to adjust the size of \(SD\) based on our sample size, creating a new value we can plug in in place of \(\sigma\), which is called the Standard Error of the Mean: \(SE = \frac{SD}{\sqrt N}\). Moreover, because we are relying on data from a sample, we also need to rely on the sample statistic \(\bar{X}\), rather than the population parameter \(\mu\). Thus, our new confidence interval calculation becomes:

\(CI_{CL} = \bar{X} \pm Z_{CL}{\frac{SD}{\sqrt N}}\)

or….

\(CI_{CL} = \bar{X} \pm Z_{CL}{SE}\)

Below, I’ve added a CI calculation function, ci.calc(), for calculating a confidence interval for a given variable within a data frame (or a list of values), at a given confidence level. It takes:

- the data frame (or a values list)

- the variable name for the variable of interest

- the confidence level

as such…

## Mean CI lower CI upper Std. Error

## 4.50602417 4.37513291 4.63691542 0.06678125

Above, we see the mean for the risk_score variable (within the data1 data frame), the lower and upper bounds for the 95 percent confidence level, and the standard error.
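To connect this output back to the formula, the sketch below rebuilds the standard error and the 95 percent interval from the summary values reported for risk_score (SD = 2.784066, N = 1738, mean = 4.50602417). The helper ci_from_summary is a hypothetical name, not the ci.calc() function. It uses qnorm() for the Z-score, which gives 1.959964 rather than the rounded 1.96, so the bounds can differ from the output above in the sixth decimal place.

```r
# Standard error of the mean: SE = SD / sqrt(N)
se <- 2.784066 / sqrt(1738)

# CI = mean +/- Z_CL * SE, with Z_CL looked up from the normal distribution
ci_from_summary <- function(mean_x, se, level = 0.95) {
  z_cl <- qnorm(1 - (1 - level) / 2)  # e.g. about 1.96 for 95%
  c(lower = mean_x - z_cl * se, upper = mean_x + z_cl * se)
}

ci95 <- ci_from_summary(mean_x = 4.50602417, se = se, level = 0.95)
round(se, 5)    # 0.06678
round(ci95, 3)  # lower 4.375, upper 4.637

# A higher confidence level gives a wider interval around the same mean
ci99 <- ci_from_summary(mean_x = 4.50602417, se = se, level = 0.99)
print(ci99)
```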

Beyond this, we could read in a values list or a variable within a data frame using the dollar sign operator. However, when doing this, you should explicitly specify which value is the confidence level. For example, if we wanted to calculate the 99 percent confidence interval for the age variable from the data1 data frame, then you could also run it as such:

## Mean CI lower CI upper Std. Error

## 35.3935558 34.6917239 36.0953877 0.2724503Assessing Normality with Visualizations

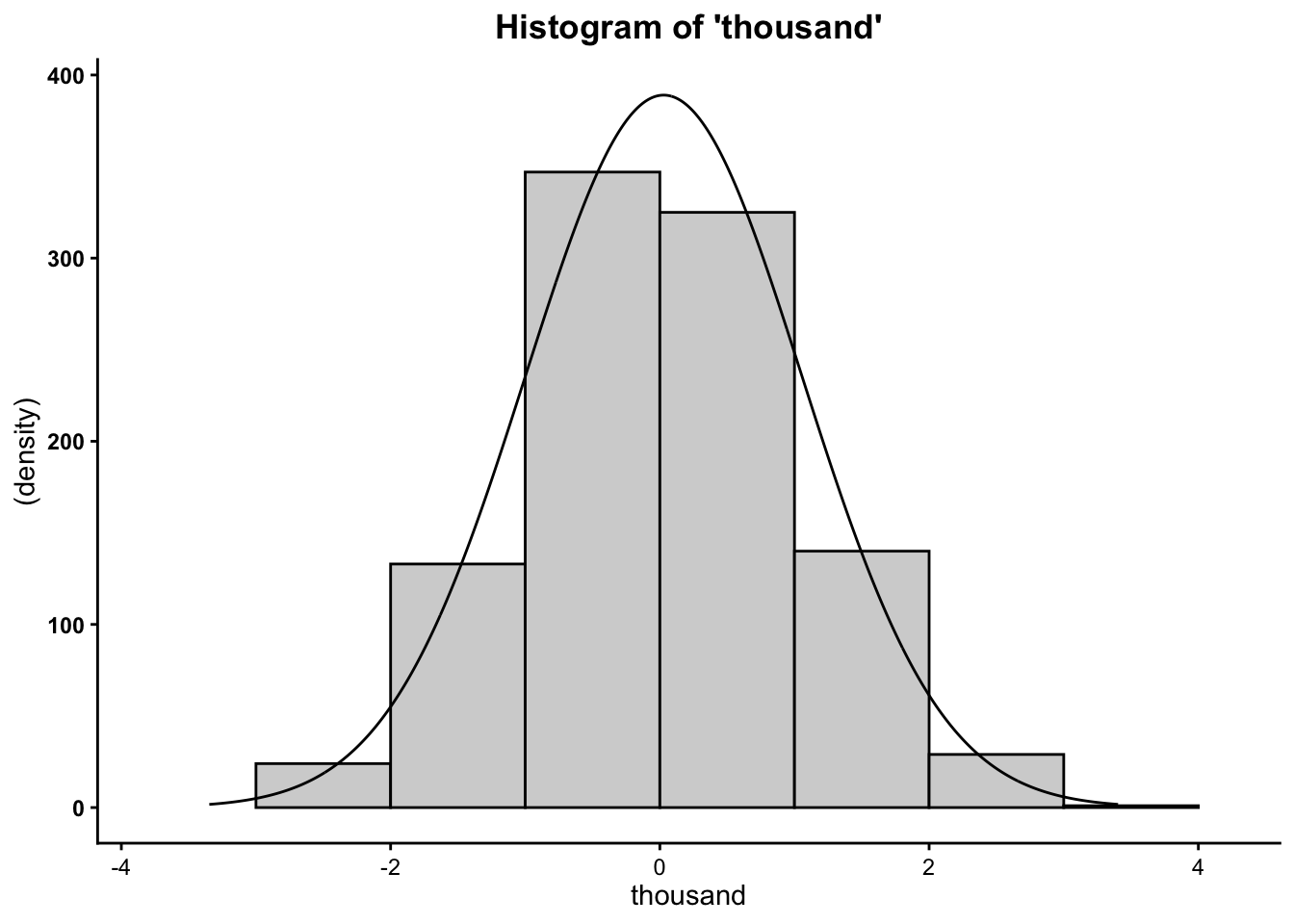

Histograms

In addition, you can create a visual representation (plot) of univariate data using a histogram. To quickly plot the histogram of one variable, and to overlay a normal curve on the histogram, we can use the hst function from the vannstats package.

The hst function for plotting one variable (i.e., not broken out by categories of another variable) takes two arguments:

- the data set name

- the variable name for the variable of interest

as such…
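As a rough base R analogue (not the hst function itself), the sketch below draws a histogram and overlays a normal curve with the same mean and standard deviation as the data. The scores are simulated stand-ins on a 0 to 10 scale, not the risk_score variable.

```r
set.seed(123)
scores <- runif(500, min = 0, max = 10)  # simulated stand-in data

m <- mean(scores)
s <- sd(scores)

# freq = FALSE puts the y-axis on the density scale, so the curve is comparable
hist(scores, freq = FALSE,
     main = "Histogram with normal curve", xlab = "Score")

# Overlay the normal curve implied by the sample mean and SD
curve(dnorm(x, mean = m, sd = s), add = TRUE, lwd = 2)
```

If the bars track the curve closely, the variable is roughly normal. Here the simulated scores are flat rather than bell-shaped, so the bars sit above the curve in the tails and below it in the middle.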

However, as we begin to move into analyzing bivariate relationships, we may find it necessary to visualize histograms by breaking them out by levels or categories of different variables.

To plot the histogram for risk score broken out by race, use the same hst function from the vannstats package, and simply add a third (and even a fourth) argument:

- the data set name

- the variable name for the variable of interest

- the (first) grouping variable name

- the (second) grouping variable name

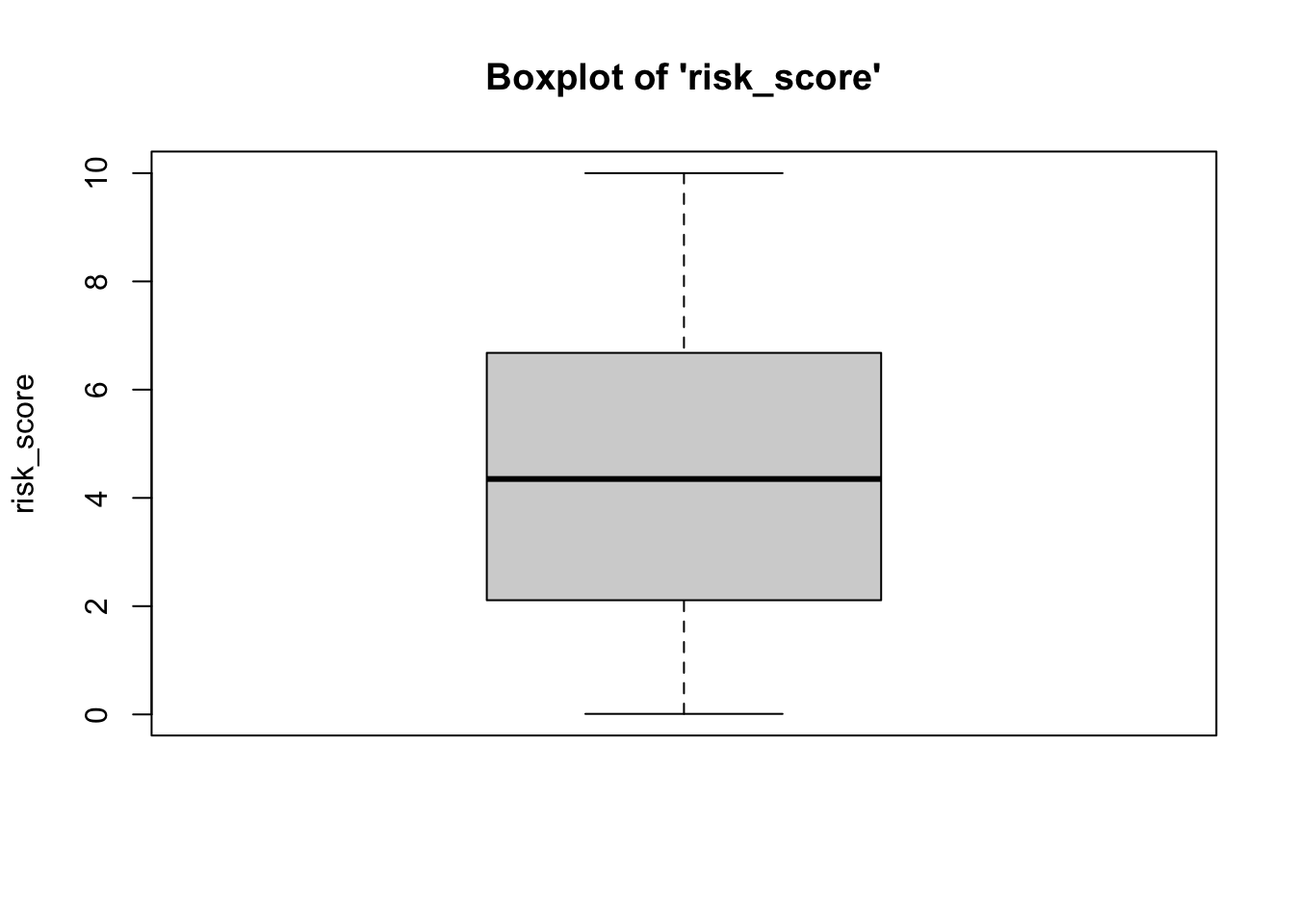

Boxplots (Box-and-Whisker Plots)

Boxplots also provide a visual representation of the normality of a distribution. The boxplot has a box, a line through the box, two whiskers on either end of the box, and sometimes dots/points outside the whiskers. Below, we get a sense of what each part of the boxplot represents…

- Bottom (or left end) whisker extends to the lowest data point that is no more than 1.5x the size of the interquartile range (IQR) below the first quartile. If there is an actual data point exactly at that limit, the bottom whisker ends there. If there is not, the bottom whisker is pulled inward to the closest, less extreme data point.

- Bottom (or left end) of the box represents the first quartile (the 25th percentile case)

- Middle line (or dot) inside the box represents the median, also known as the second quartile (the 50th percentile case)

- Top (or right end) of the box represents the third quartile (the 75th percentile case)

- Top (or right end) whisker extends to the highest data point that is no more than 1.5x the size of the interquartile range (IQR) above the third quartile. If there is an actual data point exactly at that limit, the top whisker ends there. If there is not, the top whisker is pulled inward to the closest, less extreme data point. When there are no outliers, the end of the whisker is the maximum score for that variable’s distribution.

- Outside dots represent outliers - extreme high or extreme low values for that variable.

To determine whether or not a variable is normally-distributed using the box-and-whisker plot, generally, we want to see that there is some distance between the box and the end of the whiskers, that the box isn’t pushed too close to either whisker, that the median line (dot) is near the center of the box, and that there aren’t many outliers (dots) on the outside of the whiskers.
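The parts of the boxplot described above can be recovered numerically. The sketch below uses base R’s boxplot.stats() on a small made-up vector. Note that boxplot.stats() works from hinges, which can differ slightly from the quartiles returned by quantile(). The vannstats box function may compute these differently, so treat this as an illustration of the 1.5x IQR rule only.

```r
scores <- c(1, 2, 3, 4, 5, 6, 7, 8, 9, 30)  # made-up values; 30 is extreme

bs <- boxplot.stats(scores, coef = 1.5)

# bs$stats holds: lower whisker, lower hinge, median, upper hinge, upper whisker
lower_hinge <- bs$stats[2]
upper_hinge <- bs$stats[4]
box_iqr     <- upper_hinge - lower_hinge

# Limits beyond which a point is flagged as an outlier
lower_fence <- lower_hinge - 1.5 * box_iqr
upper_fence <- upper_hinge + 1.5 * box_iqr

# Whiskers end at the most extreme data points inside the fences
whisker_ends <- bs$stats[c(1, 5)]
outliers     <- bs$out

print(c(lower_fence = lower_fence, upper_fence = upper_fence))
print(whisker_ends)  # 1 9
print(outliers)      # 30

boxplot(scores, horizontal = TRUE, main = "Boxplot of made-up scores")
```

Here the upper whisker stops at 9, the largest value inside the upper fence of 15.5, and 30 is drawn as a separate outlier dot.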

To plot a boxplot, we use the box function from the vannstats package. The function takes two arguments, if you do not want to break it out by values of another variable:

- the data set name

- the variable name for the variable of interest

Further, this function takes a maximum of four arguments:

- the data set name

- the variable name for the variable of interest

- the (first) grouping variable name

- the (second) grouping variable name

To break the above boxplot out by categories of race, we can do the following…

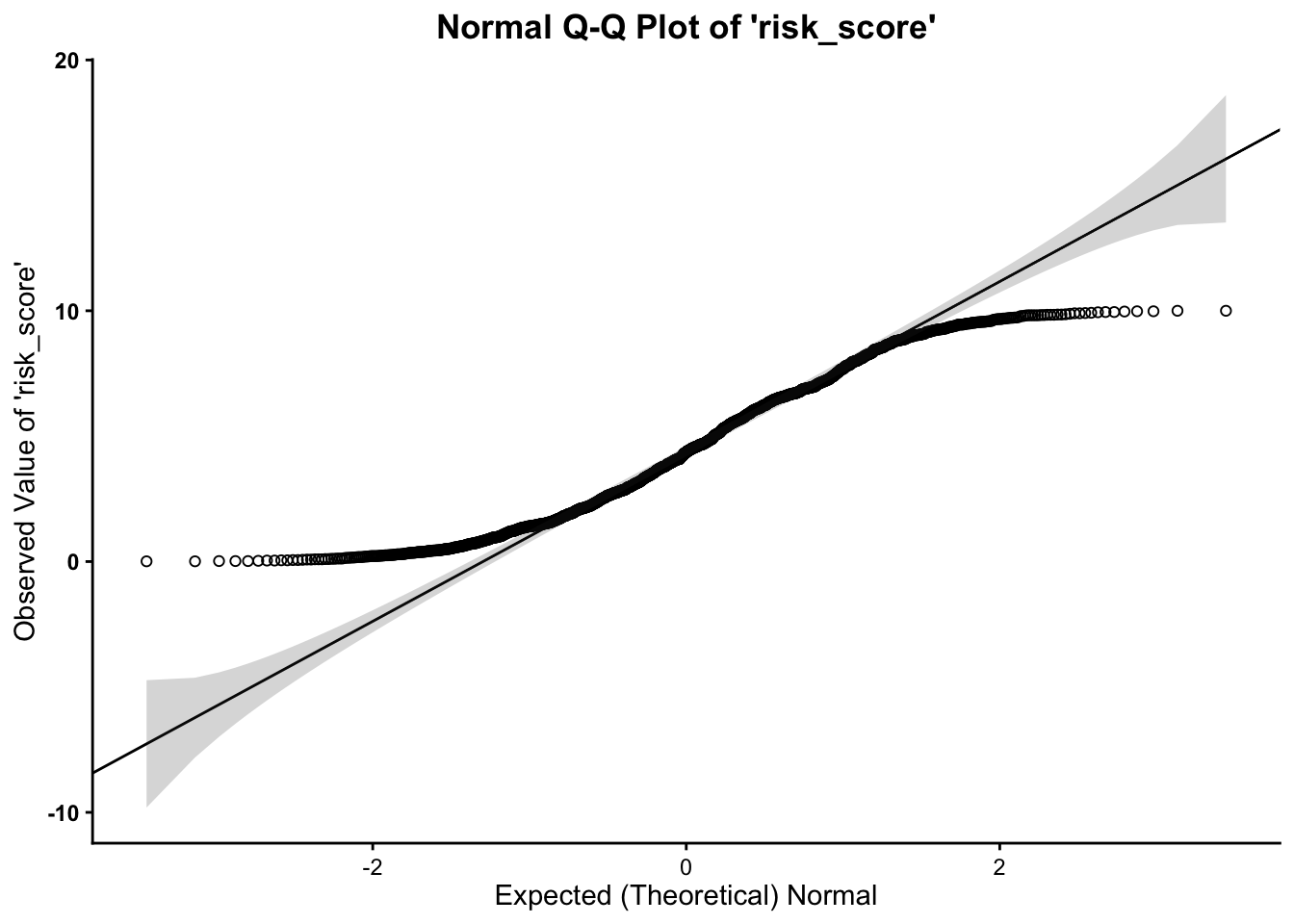

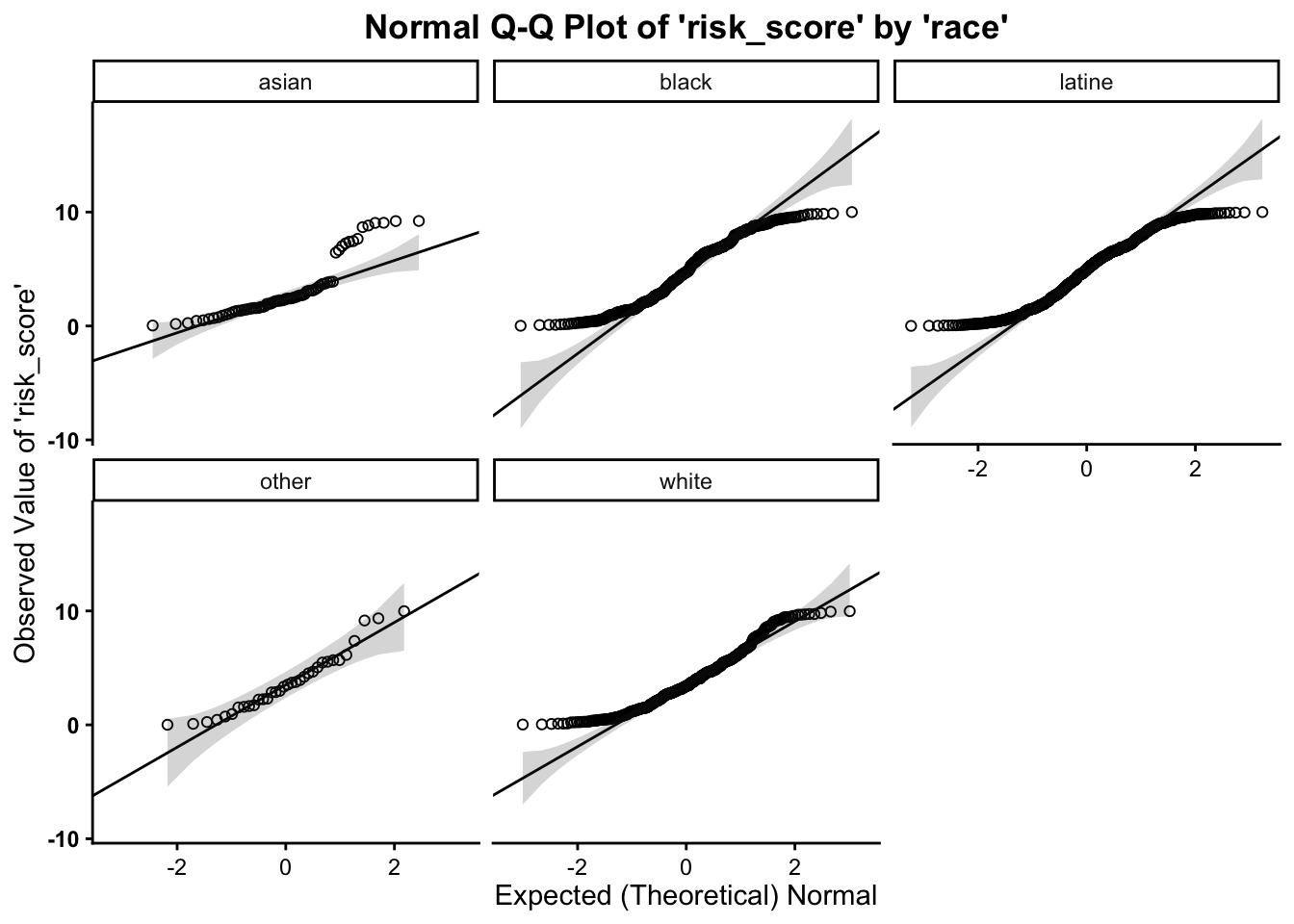

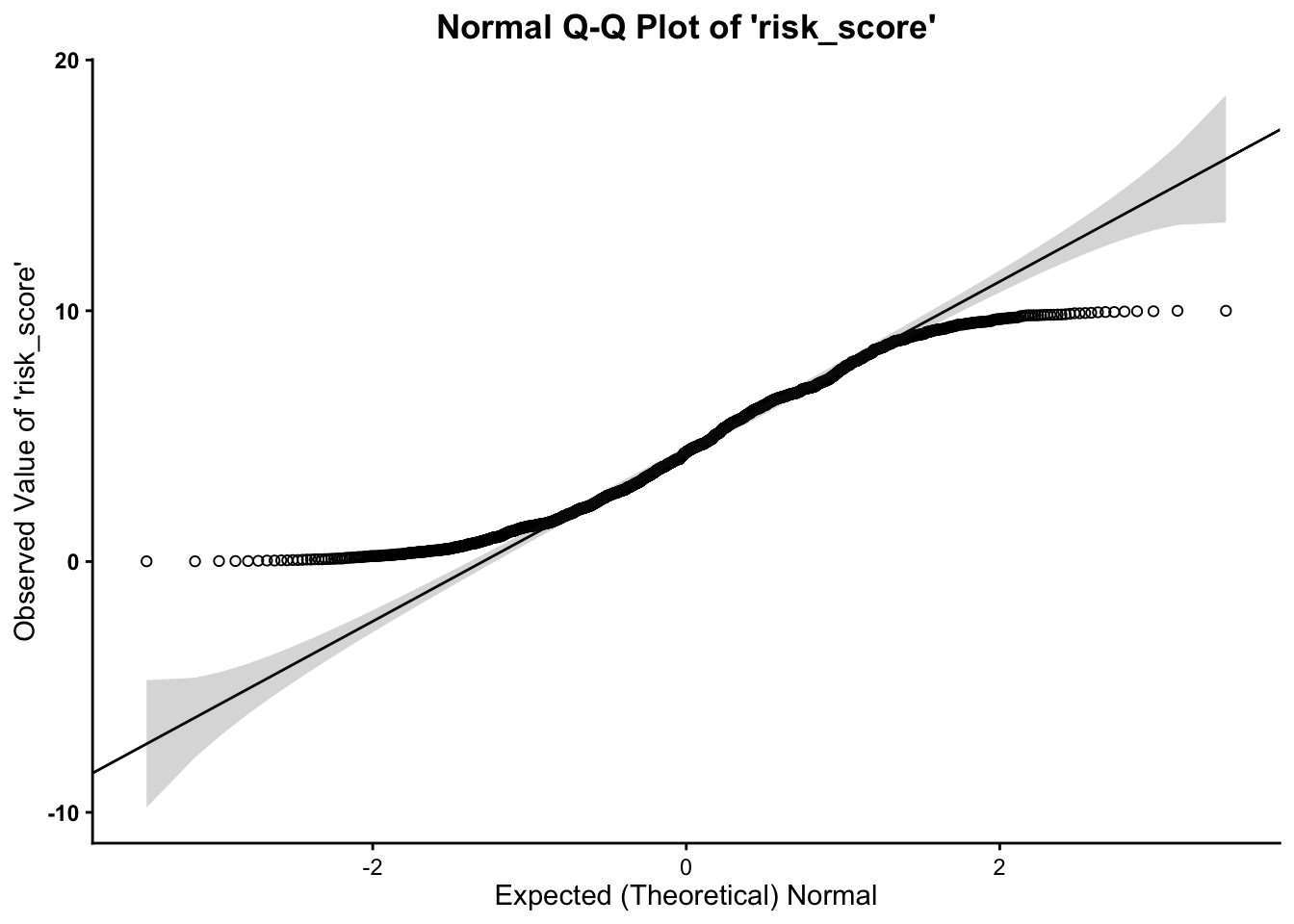

Normal Q-Q (Quantile-Quantile) Plots

Much as in the above, we want to assess whether or not our variable follows the normal distribution. As such, the quantile-quantile plot is a visual tool to help us figure out if the empirical distribution of our variable fits (or rather, comes from) a theoretical normal distribution.

We fit a plot of our data/variable (usually on the Y-axis) against “theoretical data” that should occur if the data came from a normal distribution (e.g. Expected Normal on the X-axis). If our data actually fit a normal curve, then the dots on the plot should follow a straight line, or be reasonably close to the line plotted.
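The idea of plotting observed values against expected normal values can be sketched with base R’s qqnorm() and qqline() (these are base functions, not the vannstats qq function). The data here are simulated, and the correlation at the end is only an informal summary of how closely the dots follow a straight line, not a formal test of normality.

```r
set.seed(42)
normal_scores <- rnorm(200, mean = 50, sd = 10)  # simulated, normal by design

# qqnorm() plots sample quantiles (y) against theoretical normal quantiles (x)
# and returns those coordinates invisibly
qq_points <- qqnorm(normal_scores, main = "Normal Q-Q Plot")

# qqline() adds the reference line the dots should follow under normality
qqline(normal_scores)

# Informal check: near-normal data give a correlation close to 1
qq_cor <- cor(qq_points$x, qq_points$y)
print(qq_cor)
```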

Below, we can assess normality to determine whether our variable follows a normal distribution, using the qq function from the vannstats package. The function takes two arguments, if you do not want to break it out by values of another variable:

- the data set name

- the variable name for the variable of interest

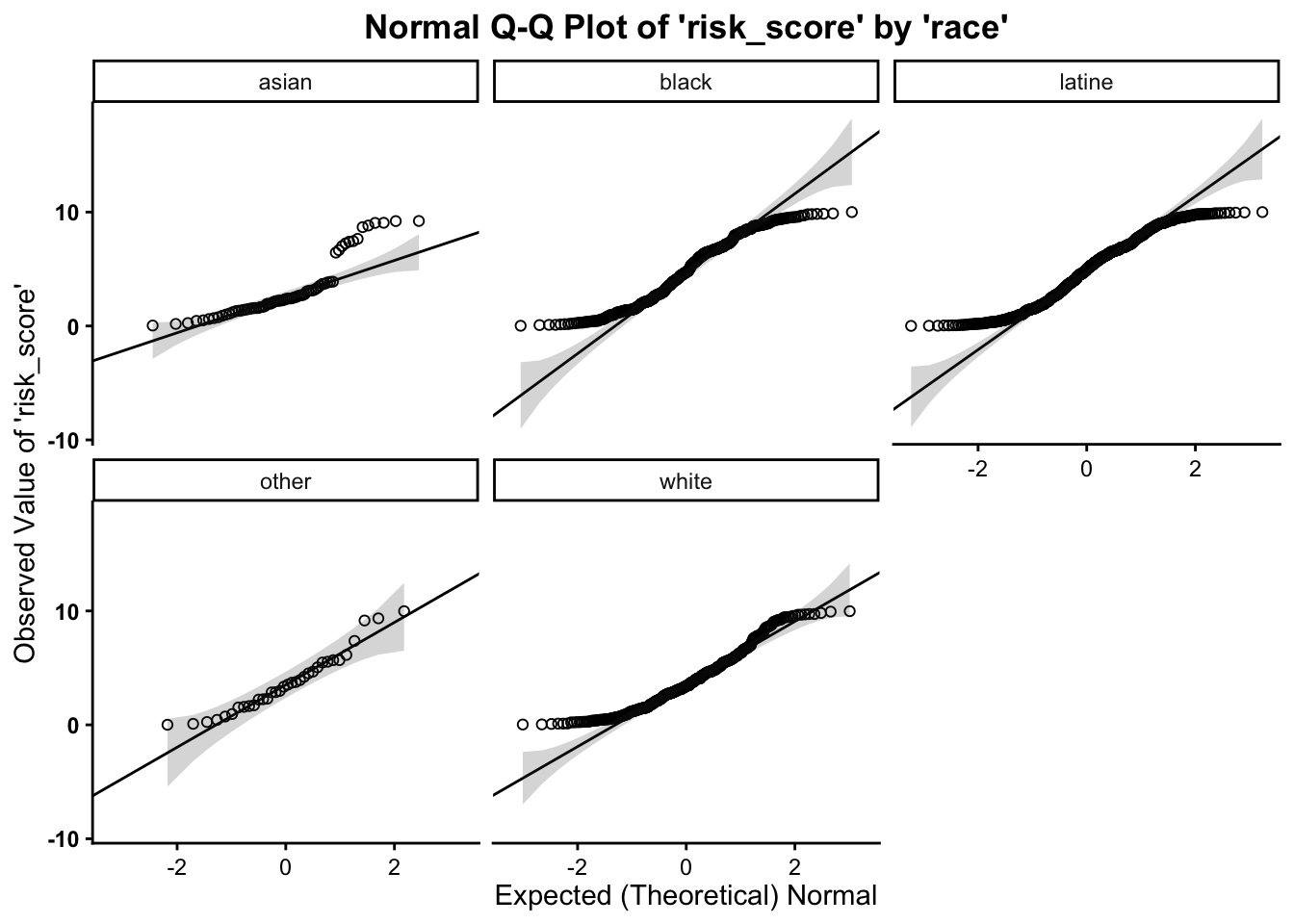

This function also takes a maximum of four arguments:

- the data set name

- the variable name for the variable of interest

- the (first) grouping variable name

- the (second) grouping variable name

As such, we can break this plot out by another grouping variable:

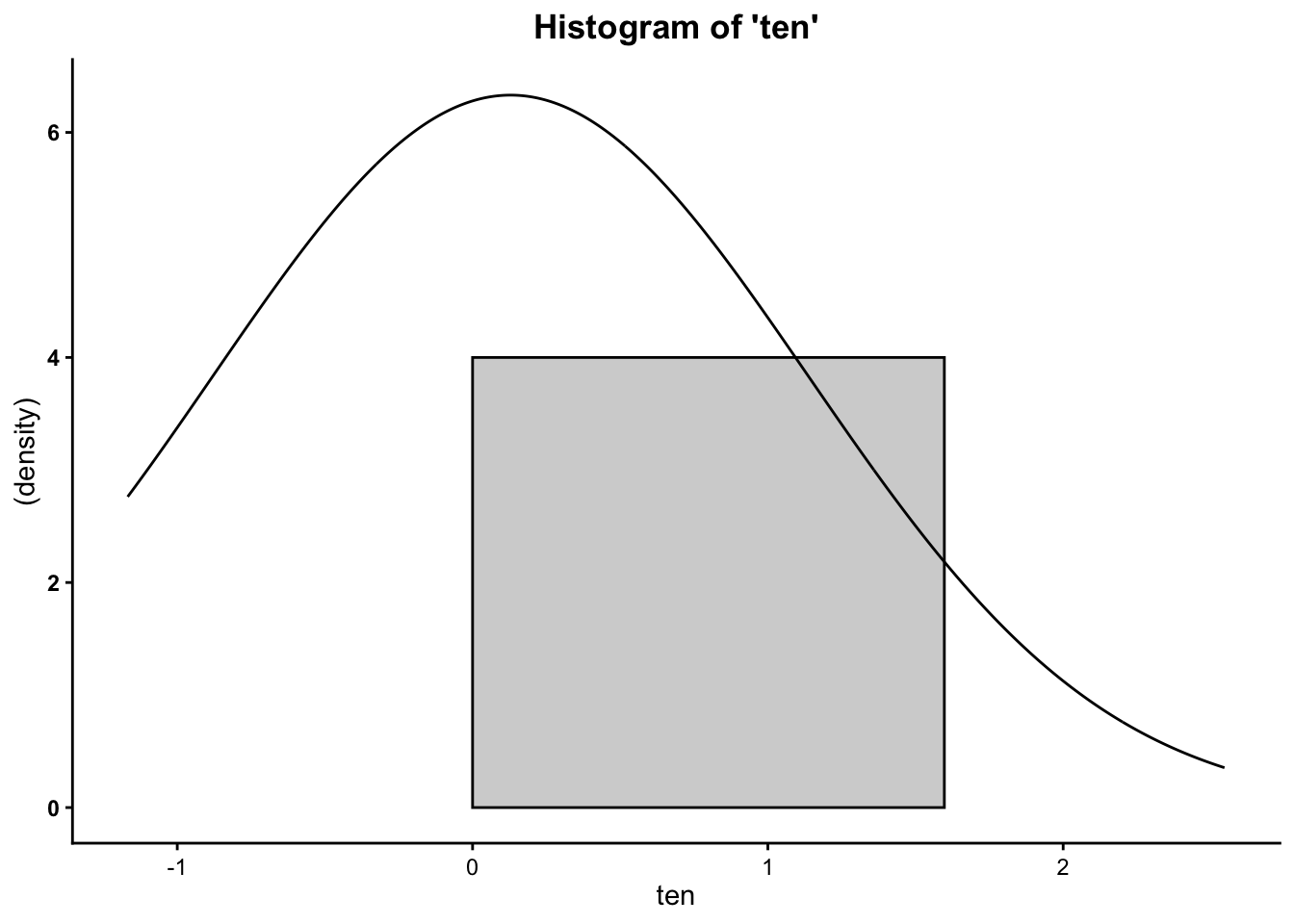

Working with Randomly Generated, Normally-Distributed Data

R also has a number of functions that generate random data. To create random, normally-distributed data, use the rnorm function, which takes a maximum of three arguments. It should look something like rnorm(100,0,1), where the first number (here, 100) represents the number of cases or data points you want in your random normally-distributed data. The second argument (here, 0) is the mean that you want your data to have. The third argument (here, 1) is the standard deviation that you want your data to have.

Note that the rnorm function takes a maximum of three arguments and a minimum of one argument (the number of cases/data points). The default settings for the rnorm function are a mean of 0 and a standard deviation of 1. This means that rnorm(100) and rnorm(100,0,1) will output similar means and standard deviations. Similar, not exactly the same, because these data are randomly generated: the values of the data points will vary from run to run, but the sample mean and standard deviation will stay close to 0 and 1.
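A short sketch can confirm the point about defaults. Fixing the random seed with set.seed() (an addition here, not something the text above uses) makes the draws repeatable, which shows that rnorm(100) and rnorm(100,0,1) are the same call and that only the randomness makes results differ.

```r
# Same seed, default arguments vs. explicit mean = 0 and sd = 1
set.seed(1)
a <- rnorm(100)
set.seed(1)
b <- rnorm(100, 0, 1)
identical(a, b)  # TRUE: the defaults are mean 0 and sd 1

# A different seed gives different values drawn from the same distribution
set.seed(2)
d <- rnorm(100)
identical(a, d)  # FALSE

# The sample statistics are close to, but not exactly, 0 and 1
c(mean_a = mean(a), sd_a = sd(a), mean_d = mean(d), sd_d = sd(d))
```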

Obviously, you can alter the number of cases involved.

## [1] 0.04970809 -0.54423814 -1.33691356 2.37038023 -0.38415600 -0.21507511 -0.19076261 1.27371439

## [9] 1.35031832 -1.21091518

or…

## [1] -0.785400661 -0.106263676 -0.076022175 -0.859906717 -0.894520185 0.424001728 1.359614511

## [8] -1.196792974 1.742574333 -0.010260233 1.368851898 -0.811142497 0.683032060 -1.338555652

## [15] 0.111611695 -0.712137801 -0.255970887 0.602598748 -0.498271330 2.438080130 0.020948223

## [22] -0.872976076 0.139184298 1.391723571 -1.179853700 0.673739482 0.482169502 -0.176129497

## [29] -1.025585416 0.492826118 1.531753324 -1.518244333 2.711649620 -0.325018121 -0.016591231

## [36] -0.802228730 -1.280137749 -0.575164609 -1.017959465 1.119966673 0.979276706 0.530695605

## [43] 1.163463432 0.878169990 0.345802664 -0.124127309 -0.083629530 -0.713872637 0.738675368

## [50] 0.666532256 0.922664269 -0.494126846 0.415625392 0.635368894 1.051667359 -1.104563473

## [57] 0.566344563 1.677746533 2.020726431 0.741947261 -0.354818603 2.082252262 0.415020166

## [64] 0.008843922 -1.277809056 -0.254126768 0.351760959 1.844591232 -0.478299888 -0.065318369

## [71] 0.455855126 -1.338145742 -0.608300773 -1.943647332 -0.186509537 0.211048291 -0.370803501

## [78] -0.045747799 -0.387643982 0.145934651 0.811227296 -0.406236970 -1.260064301 0.533370632

## [85] 1.314120167 0.012219507 -2.063037723 -0.116605802 -0.421916581 -1.422236605 1.286468396

## [92] -0.740294980 0.652953157 -0.223703335 -0.595482633 0.652088622 -0.350663448 -1.436323245

## [99] -0.587249202 0.021717734

You can also use the assignment operator <- to assign the values of the rnorm function to an object:

Then you can run univariate statistics on those data, and even create a histogram for the data:

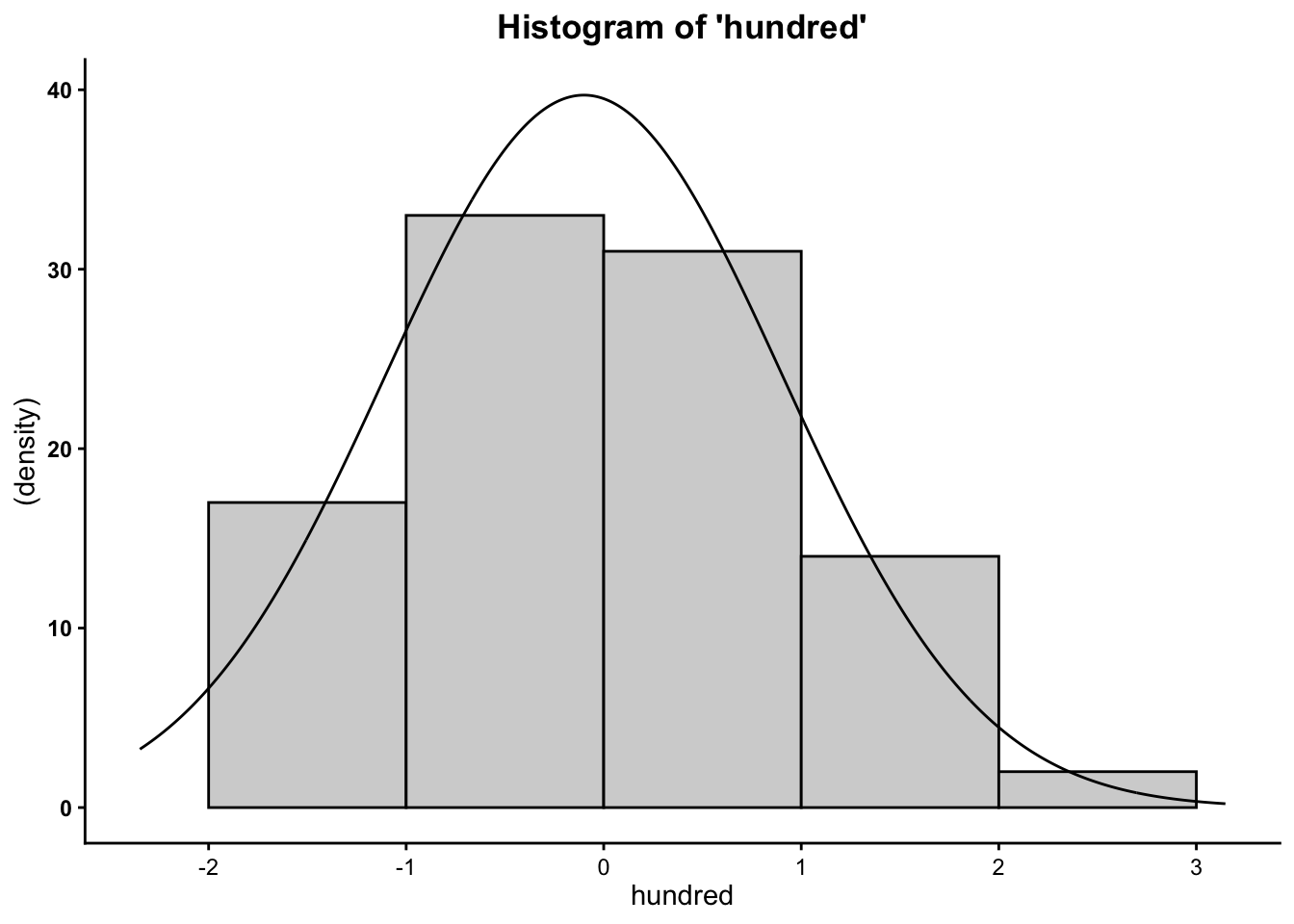

## [1] 0.02835545

## [1] -0.01510033

## [1] 1.025537

## [1] -3.777051

## [1] 3.206667

## [1] 6.983718

Finally, you can plot the histograms to see how they differ when altering the number of cases:

So now you know that the more cases/data points you have, the more your data will mimic the normal distribution (bell curve).
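One way to see this for yourself is sketched below in base R, using hist() rather than the vannstats hst function. The sample sizes and the seed are arbitrary choices for illustration.

```r
set.seed(2025)
sizes <- c(10L, 100L, 10000L)

# Draw one standard-normal sample per sample size
samples <- lapply(sizes, rnorm)

# Put the three histograms side by side, then restore the plot settings
old_par <- par(mfrow = c(1, 3))
for (i in seq_along(sizes)) {
  hist(samples[[i]], main = paste("n =", sizes[i]), xlab = "Value")
}
par(old_par)

# Sample means and SDs for each size, for comparison with 0 and 1
sapply(samples, function(x) c(mean = mean(x), sd = sd(x)))
```

With only 10 cases the histogram is usually lumpy, while the largest sample typically traces a smooth bell shape.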