One Sample t-Test

Is there a difference between the mean risk score (risk_score) in our sample and the mean risk score in the population of defendants?

Here, we’ll be working with the Defendants2025 data set to examine whether the mean risk score of defendants in our sample (risk_score: measured as an interval-ratio variable) differs from the (hypothetical) mean risk score for all defendants in the population.

What is the One Sample t-Test?

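The one sample t-test examines the difference between two means: the mean of our sample and a known or hypothesized mean for the population (\(\mu_0\)). Only the sample is measured; the population mean is treated as a fixed comparison value.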
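Written formally, the test compares the mean of the population our sample represents (\(\mu\)) with the comparison value (\(\mu_0\)). The sketch below assumes a two-tailed test, which matches the critical t of 1.961 reported in the output later in this section (the two-tailed value at \(\alpha = .05\)):

\(H_0: \mu = \mu_0 \qquad H_1: \mu \neq \mu_0\)

In this example, \(\mu_0 = 3.6\), the value passed to mu in the os.t call.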

Assumptions and Diagnostics for the One Sample t-Test

The assumptions for a t-test are…

- Independence of Observations

- Normality

1. Independence of Observations (Examine Data Collection Strategy)

- Cases in our sample are independent of one another. Examine the data collection strategy to see if there are linkages between observations.

- Given that the Defendants2025 data have been randomly sampled, we have met the assumption of independence of observations.


2. Normality (Examine Plots: Histogram, Q-Q Normality Plots, Box-and-Whiskers Plots)

- The distribution of the outcome variable must be relatively normal. In the past, you may have been instructed to use the Shapiro-Wilk test to assess normality. This is wrong. Unfortunately, tests such as these are overly sensitive to trivial deviations from normality (especially in large samples), and may lead you to believe you must correct for non-normality by transforming your data. Please do not do this. The good news is that the t-test is quite robust: robust enough to provide trustworthy results even when the data are not perfectly normally distributed.

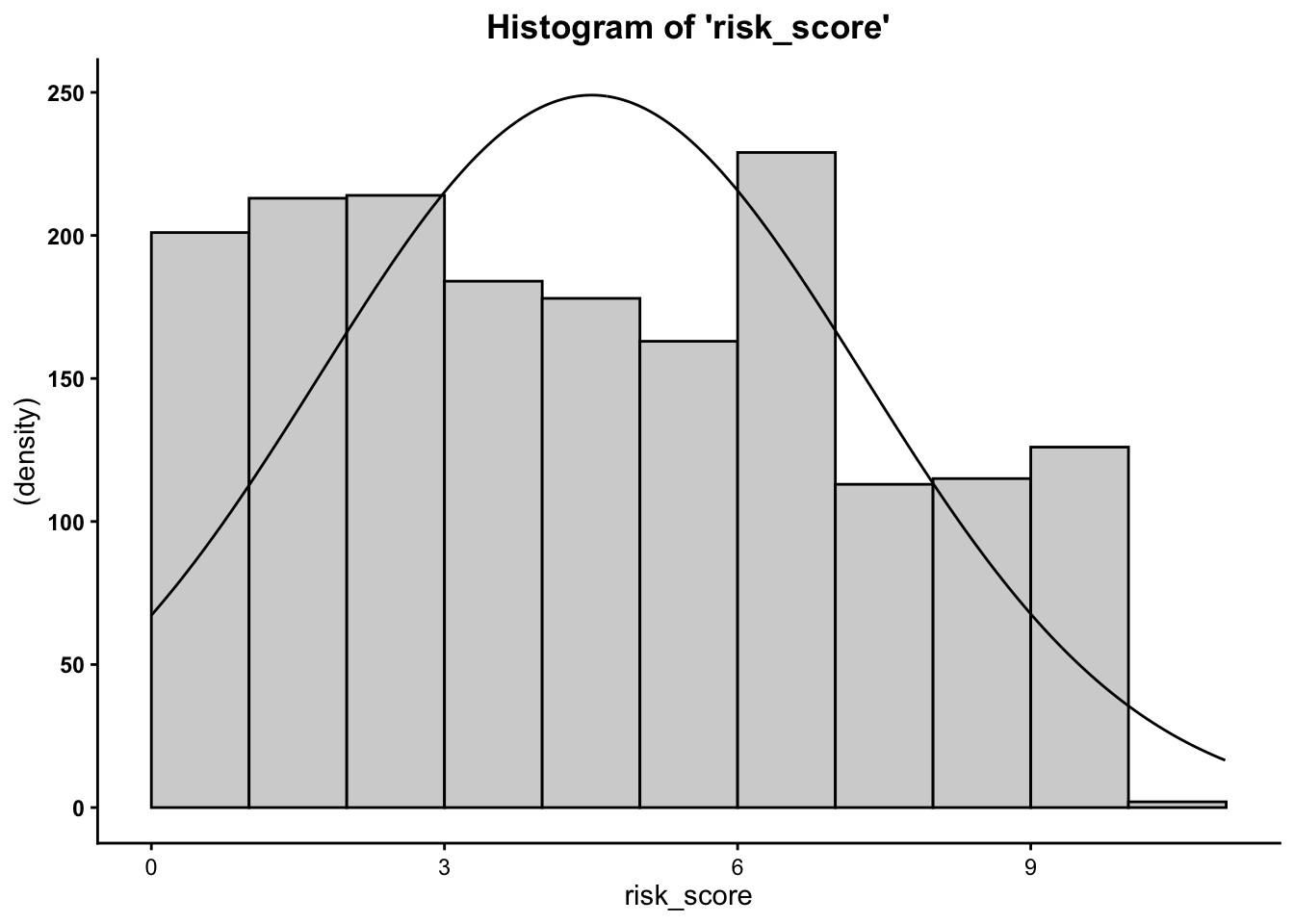

2a. Histogram

Plot the histogram for risk score (Y variable)…
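One way to draw this in base R is with hist(). The sketch below assumes a data frame named data1 (the name used in the os.t call later in this section) with a risk_score column. Because the Defendants2025 data are not loaded here, it simulates a stand-in; the mean and SD used for the simulation are arbitrary assumptions, so the values are illustrative only and are not the real risk scores.

```r
# Simulated stand-in for the Defendants2025 data (illustrative values only;
# replace with your own data frame that contains a risk_score column).
set.seed(2025)
data1 <- data.frame(risk_score = rnorm(1738, mean = 4.5, sd = 2.8))

# Histogram of the outcome (Y) variable
h <- hist(data1$risk_score,
          main = "Histogram of risk_score",
          xlab = "Risk score",
          col = "lightgray")
```

With real data, you would skip the simulation lines and call hist() on your own column.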

- We can see from the histogram that the distribution of the outcome variable (risk_score) is relatively normal.

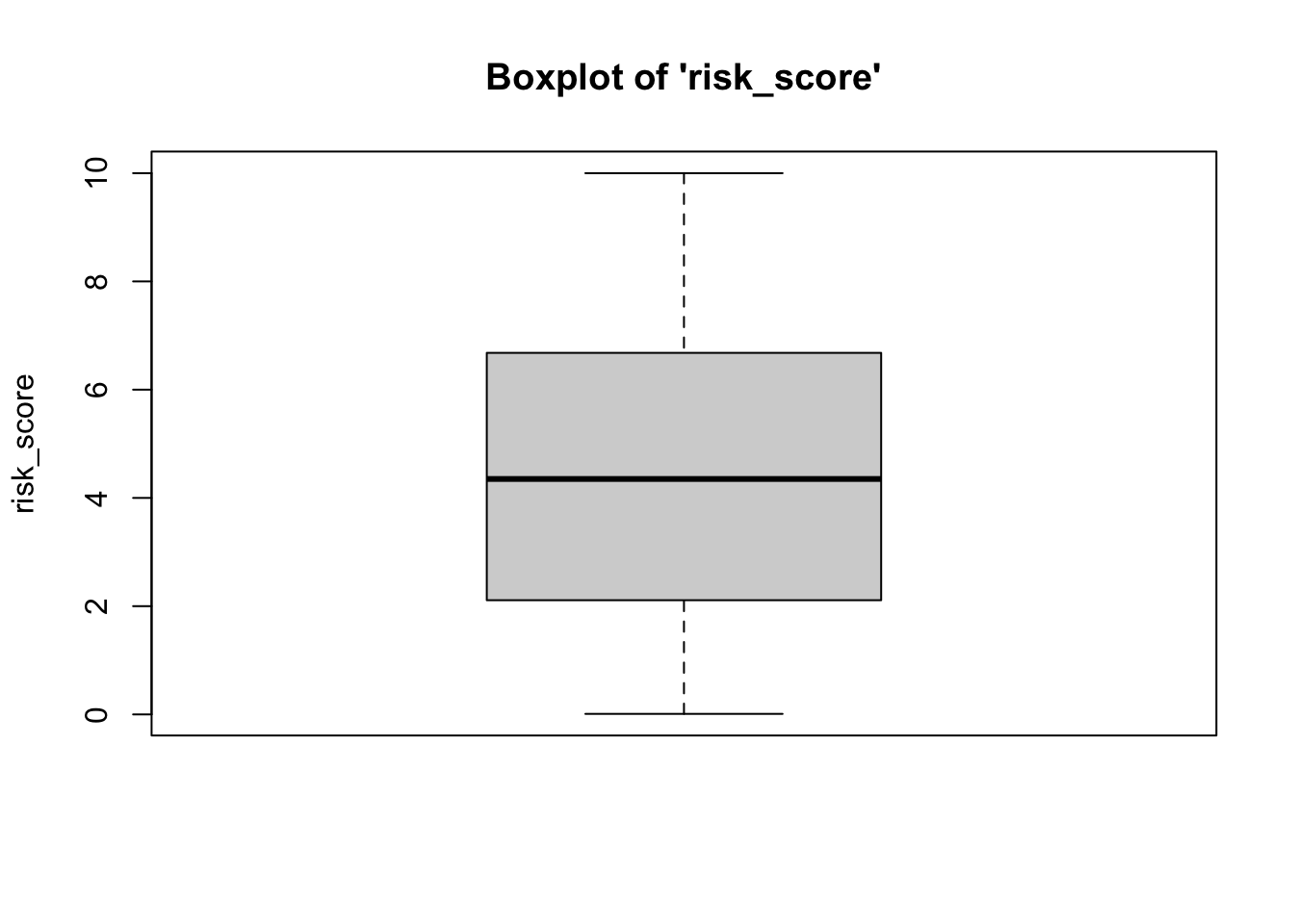

2b. Boxplots (Box-and-Whisker Plots)

Boxplots also provide a visual representation of the normality of a distribution. The boxplot has a box, a line through the box, two whiskers on either end of the box, and sometimes dots/points outside the whiskers. Below, we get a sense of what each part of the boxplot represents…

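- Bottom (or left end) of the lower whisker represents the lowest score that is not an outlier (in R's default boxplot, the most extreme value within 1.5 times the interquartile range below the box); if there are no outliers, this is the minimum score for that variable’s distribution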

- Bottom (or left end) of the box represents the first quartile (the 25th percentile case)

- Middle line (or dot) inside the box represents the median, also known as the second quartile (the 50th percentile case)

- Top (or right end) of the box represents the third quartile (the 75th percentile case)

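- Top (or right end) of the upper whisker represents the highest score that is not an outlier (in R's default boxplot, the most extreme value within 1.5 times the interquartile range above the box); if there are no outliers, this is the maximum score for that variable’s distribution

- Outside dots represent outliers: extreme high or extreme low values for that variable that fall beyond the whiskers.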
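To see how R decides where each part of the plot goes, base R's boxplot.stats() (the helper that boxplot() relies on) returns the five numbers that are drawn plus any outliers. By default (coef = 1.5), the whiskers stop at the most extreme values lying within 1.5 times the box length of the box, and the box edges are Tukey's hinges, which are close to the first and third quartiles. The small vector below is made up for illustration:

```r
# A small made-up vector with one extreme value
x <- c(1, 2, 3, 4, 5, 6, 7, 8, 9, 30)

bs <- boxplot.stats(x)  # default coef = 1.5
bs$stats  # lower whisker, lower hinge, median, upper hinge, upper whisker
# [1] 1.0 3.0 5.5 8.0 9.0
bs$out    # points beyond the whiskers, drawn as dots
# [1] 30
```

Note that the upper whisker ends at 9, the largest value that is not an outlier, rather than at the maximum of 30.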

To tell if a variable is normally distributed using the box-and-whisker plot, we generally want to see that there is some distance between the box and the ends of the whiskers, that the box isn’t pushed too close to either whisker, that the median line (dot) is near the center of the box, and that there aren’t many outliers (dots) outside the whiskers.

To plot a boxplot for risk score, we do the following…
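A base-R sketch of this step uses boxplot(). As with the histogram sketch, data1 here is a simulated stand-in (arbitrary, assumed mean and SD) rather than the Defendants2025 data, so the picture is illustrative only.

```r
# Simulated stand-in data (illustrative only; use your own data frame)
set.seed(2025)
data1 <- data.frame(risk_score = rnorm(1738, mean = 4.5, sd = 2.8))

# Vertical box-and-whisker plot of the outcome variable
b <- boxplot(data1$risk_score,
             main = "Boxplot of risk_score",
             ylab = "Risk score")

# b$stats holds the whisker ends, hinges, and median that were drawn
b$stats
```

boxplot() also returns its summary invisibly, so saving it as b lets you check the numbers behind the picture.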

- We can see from the boxplot that the data are approximately normally distributed: the median falls in the center of the interquartile range, and the interquartile range is centered between the whiskers. Since these data aren’t drastically different from normal, it is safe to treat them as close enough to normal and proceed with the statistical test.

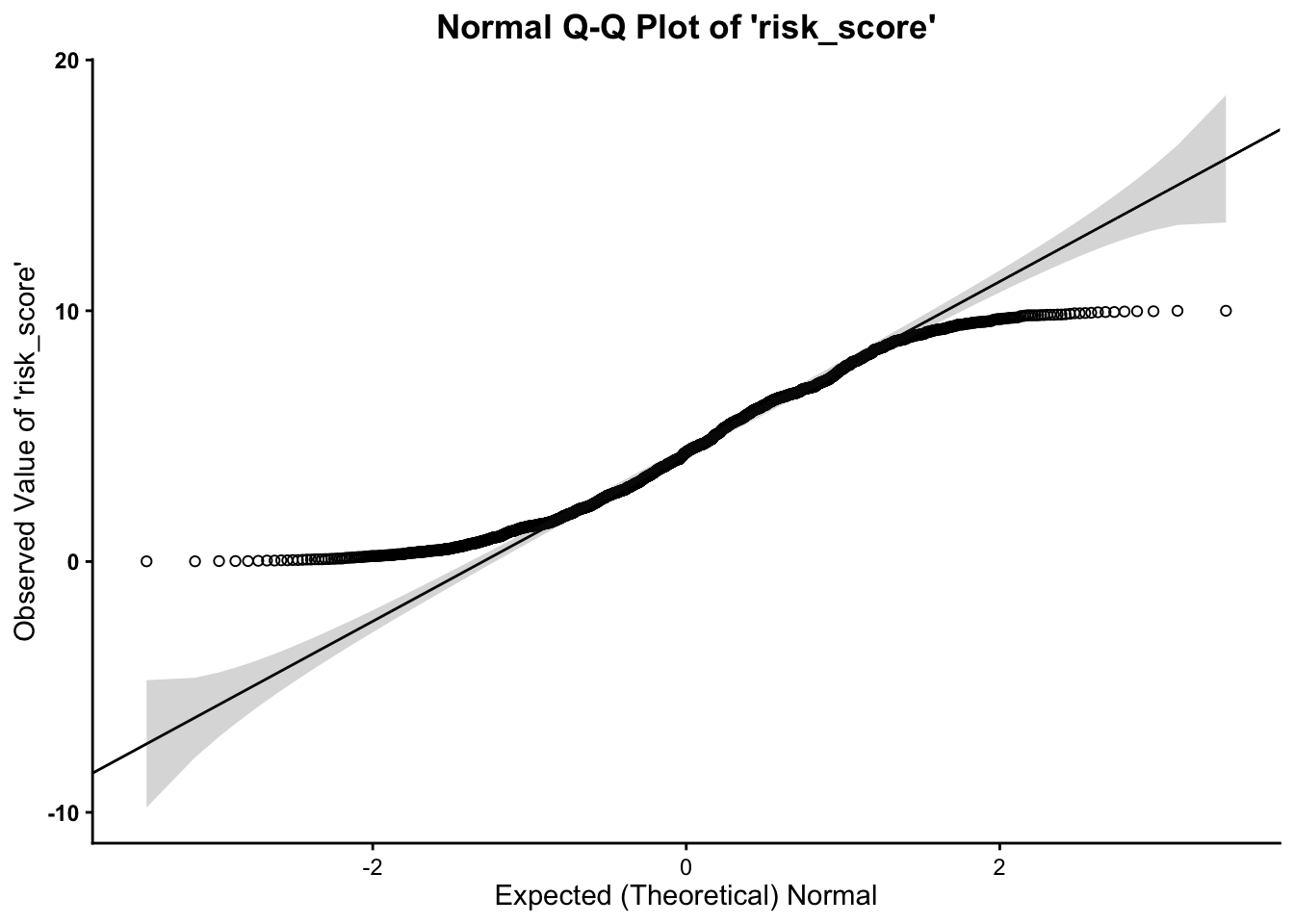

2c. Normal Q-Q (Quantile-Quantile) Plots

The quantile-quantile plot is a visual tool to help us figure out if the empirical distribution of our variable fits (or rather, comes from) a theoretical normal distribution.
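Under the hood, a normal Q-Q plot pairs each sorted observation with the quantile a standard normal distribution would place at the same rank. The minimal base-R sketch below builds those theoretical quantiles by hand with ppoints() and qnorm() for a small made-up sample, then checks them against qqnorm(plot.it = FALSE), which computes the same pairs without drawing anything.

```r
# Small made-up sample (illustrative only)
y <- c(2.1, 3.4, 4.0, 4.4, 5.2, 5.9, 7.3)

# Theoretical normal quantiles at n plotting positions
theoretical <- qnorm(ppoints(length(y)))
# Empirical (sample) quantiles are just the sorted data
empirical <- sort(y)

# qqnorm() returns the same pairs: x = theoretical, y = sample values
q <- qqnorm(y, plot.it = FALSE)
all.equal(q$x[order(q$y)], theoretical)
# [1] TRUE
```

If the (theoretical, empirical) pairs fall close to a straight line, the sample quantiles track the normal ones.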

We assess normality for risk score using the following…
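In base R, this plot can be sketched with qqnorm() and qqline(); qqline() draws a reference line through the first and third quartiles by default. Again, data1 is a simulated stand-in (assumed mean and SD), not the real data.

```r
# Simulated stand-in data (illustrative only; use your own data frame)
set.seed(2025)
data1 <- data.frame(risk_score = rnorm(1738, mean = 4.5, sd = 2.8))

# Sample quantiles plotted against theoretical normal quantiles
qq <- qqnorm(data1$risk_score, main = "Normal Q-Q Plot of risk_score")
# Reference line through the first and third quartiles
qqline(data1$risk_score, col = "red")
```

Points that curl away from the red line at either end indicate heavier or lighter tails than a normal distribution.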

- We can see from the Q-Q plot that the outcome variable (risk_score) is somewhat normal; however, the data clearly curl away from the normality line at the tails of the distribution, which indicates some deviation from normality. Because this deviation is modest and the t-test is robust to it (particularly in a large sample), it is still safe to proceed with the statistical test.

- Across all three plots of risk_score, the variable does not seem to drastically deviate from normality. Therefore, we can treat it as approximately normal.

The One Sample t-Test Calculation

The calculation for the t-Test is:

\(t = \frac{\bar{x}-\mu_0}{\frac{SD}{\sqrt{n}}}\)

where…

- \(\bar{x}\) is the mean for the sample

- \(\mu_0\) is the mean for the population

- \(SD\) is the standard deviation for the sample

- \(n\) is the sample size
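To make the formula concrete, here is a minimal base-R sketch that computes \(t\) by hand for a small made-up sample (the values in x and the comparison value mu0 = 3.6 are illustrative assumptions) and checks the result against base R's t.test(). Note that sd() uses the sample \(n - 1\) denominator, matching \(SD\) in the formula.

```r
# Made-up sample (illustrative only) and a hypothesized population mean
x   <- c(3, 5, 4, 6, 7)
mu0 <- 3.6

x_bar <- mean(x)    # sample mean
s     <- sd(x)      # sample standard deviation (n - 1 denominator)
n     <- length(x)  # sample size

# t = (x_bar - mu0) / (SD / sqrt(n))
t_manual <- (x_bar - mu0) / (s / sqrt(n))
t_manual
# [1] 1.979899

# The same statistic from base R's one sample t-test
unname(t.test(x, mu = mu0)$statistic)
```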

In addition, the degrees of freedom (\(df\)) for the test is…

\(df = n - 1\)
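Plugging in the degrees of freedom reported in the output below (\(df = 1737\), so \(n = 1738\)), base R's qt() and pt() reproduce the critical value and show why the p-value is printed as a bound. This sketch assumes a two-tailed test at \(\alpha = .05\). R prints p-values smaller than machine precision (about 2.2e-16) as "< 2.2e-16", which is the 0.00000000000000022 in the output.

```r
n  <- 1738
df <- n - 1  # degrees of freedom: 1737

# Two-tailed critical t at alpha = .05
t_crit <- qt(1 - 0.05 / 2, df = df)
round(t_crit, 3)
# [1] 1.961

# Two-tailed p-value for the obtained t of 13.567
p_value <- 2 * pt(-abs(13.567), df = df)
p_value < 2.2e-16  # smaller than R's usual printing threshold
# [1] TRUE
```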

Running the One Sample t-Test in R

To run the one sample t-test in R, we could use the traditional t.test function. In the vannstats package, however, we can use the os.t function.

Within the os.t function, the data frame is listed first, followed by the (interval-ratio level) variable for the sample, followed by the mean value for the population, listed last: for example, os.t(df = data1, var1 = risk_score, mu = 3.6).

Unlike the independent samples t-test, the one sample t-test does not involve an equal-variances assumption: only one sample (and therefore only one variance) is estimated, and the population mean is a fixed value. For the same reason, base R's t.test uses its var.equal argument only when two samples are compared, so there is no need to add a var.equal argument to a one sample test.
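For readers without vannstats, a base-R counterpart to os.t(df = data1, var1 = risk_score, mu = 3.6) can be sketched with t.test(). This sketch again uses a simulated stand-in for data1 (assumed mean and SD), so its numbers will not match the output in this section; with the real data, t, df, and the p-value would be expected to agree with os.t, although t.test does not print a critical t.

```r
# Simulated stand-in data (illustrative only; use the real data frame instead)
set.seed(2025)
data1 <- data.frame(risk_score = rnorm(1738, mean = 4.5, sd = 2.8))

# Base-R one sample t-test against a hypothesized population mean of 3.6
res <- t.test(data1$risk_score, mu = 3.6, alternative = "two.sided")

res$statistic  # t obtained
res$parameter  # degrees of freedom (n - 1)
res$p.value    # two-tailed p-value
res$estimate   # sample mean
```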

```
## Call:
## os.t(df = data1, var1 = risk_score, mu = 3.6)
##
## One Sample t-test:
##
## 𝑡 Critical 𝑡 df p-value
## 13.567 1.961 1737 < 0.00000000000000022 ***
## ---
## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1
##
## Sample and Population Means:
## x̅: μ:
## 4.506024 3.600000
```

In the output above, we see the t-obtained value (13.567, positive because the sample mean of 4.506 is above the population mean of 3.6), the degrees of freedom (1737), and the p-value (less than .00000000000000022, which is far below our set alpha level of .05). The obtained t also exceeds the critical t of 1.961.

To interpret the findings, we report the following information:

- The test used

- If you reject or fail to reject the null hypothesis

- The variables used in the analysis

- The degrees of freedom, calculated value of the test (\(t_{obtained}\)), and \(p\)-value

- \(t(df) = t_{obtained}\), \(p\)-value

“Using a one sample t-test, I reject/fail to reject the null hypothesis that there is no mean difference between our sample and the population, \(t(?) = ?, p ? .05\)”

- “Using a one sample t-test, I reject the null hypothesis that there is no difference between the mean risk score for those in our sample and the mean risk score of those in the population, \(t(1737) = 13.567, p \lt .05\).”
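To keep write-ups consistent, the reporting template can also be filled in programmatically. The helper below, report_one_sample_t(), is a hypothetical convenience function (not part of vannstats or base R) that picks "reject" or "fail to reject" and builds the \(t(df) = t_{obtained}, p\) string from the reported numbers, assuming \(\alpha = .05\) by default and three decimal places for t.

```r
# Hypothetical helper (not part of vannstats or base R): formats the
# reporting sentence for a one sample t-test at a chosen alpha level.
report_one_sample_t <- function(t, df, p, alpha = 0.05) {
  decision <- if (p < alpha) "reject" else "fail to reject"
  p_text   <- if (p < alpha) "<" else ">="
  alpha_text <- sub("^0", "", format(alpha))  # 0.05 -> ".05"
  sprintf("Using a one sample t-test, I %s the null hypothesis, t(%d) = %.3f, p %s %s",
          decision, as.integer(df), t, p_text, alpha_text)
}

report_one_sample_t(t = 13.567, df = 1737, p = 2.2e-16)
# [1] "Using a one sample t-test, I reject the null hypothesis, t(1737) = 13.567, p < .05"
```

The variable names and substantive wording of the hypothesis still need to be added by hand, as in the example sentence above.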